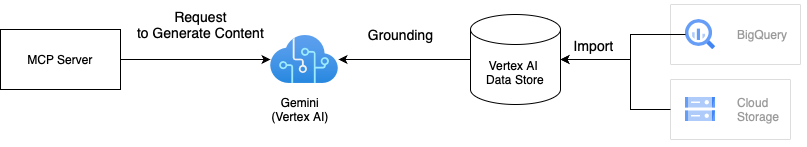

This is an MCP server for searching documents with Vertex AI.

This solution uses Gemini with Vertex AI grounding to search documents using your private data. Grounding improves the quality of search results by grounding Gemini's responses in your data stored in Vertex AI data stores. We can connect one or more Vertex AI data stores to the MCP server. For more details on grounding, refer to the Vertex AI grounding documentation.

There are two ways to use this MCP server: clone the repository, or install the Python package from the repository with pip. If you want to run it on Docker, choose the first approach, because the project provides a Dockerfile.

```shell
# Clone the repository
git clone git@github.com:ubie-oss/mcp-vertexai-search.git

# Create a virtual environment
uv venv

# Install the dependencies
uv sync --all-extras

# Check the command
uv run mcp-vertexai-search
```

The package isn't published to PyPI yet, but we can install it from the repository.

To run the MCP server, we also need a config file; its fields are listed at the end of this document.

```shell
# Install the package
pip install git+https://github.com/ubie-oss/mcp-vertexai-search.git

# Check the command
mcp-vertexai-search --help
```

For local development, the prerequisites are:

- uv
- Vertex AI data store
  - See the official documentation about data stores for more information.

Set up the local environment:

```shell
# Optional: Install uv
python -m pip install -r requirements.setup.txt

# Create a virtual environment
uv venv
uv sync --all-extras
```

The MCP server supports two transports: SSE (Server-Sent Events) and stdio (standard input/output).

We select the transport with the `--transport` flag.
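If you start the server from a script, a small wrapper can reject anything other than the two supported transports before calling `serve`. The sketch below is a hypothetical helper, not part of the project; the function name `serve_mcp`, the `DRY_RUN` variable, and the defaults (`stdio` and `config.yml`) are assumptions.

```shell
# Hypothetical helper (not part of the project): start the MCP server
# only if the transport is one of the two supported values.
serve_mcp() {
  local transport="${1:-stdio}"    # assumption: default to stdio
  local config="${2:-config.yml}"  # assumption: config file in the current directory
  case "$transport" in
    stdio|sse) ;;                  # the two transports the server supports
    *)
      echo "unsupported transport: $transport (expected stdio or sse)" >&2
      return 2
      ;;
  esac
  # With DRY_RUN=1, print the command instead of running it.
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "uv run mcp-vertexai-search serve --config $config --transport $transport"
  else
    uv run mcp-vertexai-search serve --config "$config" --transport "$transport"
  fi
}
```

For example, `serve_mcp sse` starts the server over SSE, while `DRY_RUN=1 serve_mcp stdio` only prints the command it would run.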

We configure the MCP server with a YAML file, passed with the `--config` flag:

```shell
uv run mcp-vertexai-search serve \
    --config config.yml \
    --transport <stdio|sse>
```

We can test Vertex AI Search without running the MCP server by using the `mcp-vertexai-search search` command.

```shell
uv run mcp-vertexai-search search \
    --config config.yml \
    --query <your-query>
```

The config file has the following fields:

- `server`
  - `server.name`: The name of the MCP server
- `model`
  - `model.model_name`: The name of the Vertex AI model
  - `model.project_id`: The project ID of the Vertex AI model
  - `model.location`: The location of the model (e.g. `us-central1`)
  - `model.impersonate_service_account`: The service account to impersonate
  - `model.generate_content_config`: The configuration for the generate content API
- `data_stores`: The list of Vertex AI data stores
  - `data_stores.project_id`: The project ID of the Vertex AI data store
  - `data_stores.location`: The location of the Vertex AI data store (e.g. `us`)
  - `data_stores.datastore_id`: The ID of the Vertex AI data store
  - `data_stores.tool_name`: The name of the tool
  - `data_stores.description`: The description of the Vertex AI data store
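As an illustration of how these fields nest, the following sketch writes a sample `config.yml` with a heredoc. All values (server name, model name, project ID, service account, data store ID, tool name, description) are hypothetical placeholders, and leaving `generate_content_config` as an empty mapping is an assumption; check the project for the keys it actually accepts.

```shell
# Write a sample config.yml. Every value is a hypothetical placeholder;
# replace it with your own project, model, and data store settings.
cat > config.yml <<'EOF'
server:
  name: vertexai-search                  # name of the MCP server
model:
  model_name: gemini-1.5-flash-002       # placeholder Vertex AI model name
  project_id: my-gcp-project             # placeholder project ID
  location: us-central1
  impersonate_service_account: search-sa@my-gcp-project.iam.gserviceaccount.com
  generate_content_config: {}            # assumption: empty mapping is accepted
data_stores:                             # a list: one entry per data store
  - project_id: my-gcp-project
    location: us
    datastore_id: my-datastore-id        # placeholder data store ID
    tool_name: search_internal_docs      # placeholder tool name
    description: Internal engineering documents
EOF
```

The resulting file can then be passed to both `serve` and `search` with `--config config.yml`.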